Three pains that look like mysterious inference regressions after you touch routing

1) Silent default model drift across providers. OpenClaw may accept a friendly model nickname in configuration while each backend resolves names differently. Cloud vendors expose long model identifiers with version suffixes. Ollama uses local tags such as latest that move when someone runs a pull overnight. The gateway process stays healthy and doctor passes because files parse, yet completions are truncated or refused because the effective model string no longer matches an entitled API route or a pulled local weight set. Fix this with an explicit inventory table: canonical model identifiers per provider, pinned tags for Ollama in production, and a change log entry whenever any identifier changes. Treat renames with the same rigor you apply to database migrations.

2) Quota blindness until users notice quality collapse. Rate limits rarely announce themselves with a polite banner inside chat transcripts. Some APIs return structured errors with retry-after hints; others degrade through latency spikes first. Teams that only watch application logs miss dashboard counters that already show ninety percent consumption before a hard stop. Hybrid routing without a rehearsed fallback turns the first real quota event into an unplanned architecture meeting. Establish per-route token budgets, alarms at seventy-five and ninety percent of monthly envelopes where vendors expose them, and a written decision on whether local inference may substitute for premium cloud models when budgets are exhausted.

3) Misreading signals because doctor is static while failures are dynamic. The openclaw doctor command validates configuration shape, reachable files, and many static dependencies. It does not replace a live probe confirming that your chosen cloud endpoint still authorizes the key, nor does it prove that Ollama currently holds a model resident in memory under load. Operators who stop after doctor see green checks while the next user request fails on transport or returns an empty response. Pair doctor with curl against the gateway HTTP port, archive health JSON on a schedule, and correlate anomalies with timestamps in structured logs. This ordering matters because jumping straight into verbose logs without a clean health snapshot wastes time comparing unrelated noise.

Together these pains explain why two engineers on the same version see divergent behavior: their model strings, quota postures, and diagnostic order differ. Standardize routing documentation per host role, not per individual laptop.
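The seventy-five and ninety percent alarms from the quota item above can be expressed as a small classification step. The following is a minimal sketch, not part of OpenClaw: the `RouteBudget` type, the route name, and the level names are hypothetical, and the token counts would come from whatever usage counters your vendor exposes.

```python
from dataclasses import dataclass

# Alarm thresholds taken from the text: 75% and 90% of the monthly envelope.
WARN_RATIO = 0.75
CRITICAL_RATIO = 0.90


@dataclass(frozen=True)
class RouteBudget:
    route: str            # hypothetical route name, e.g. "interactive-chat"
    monthly_tokens: int   # monthly token envelope for this route
    used_tokens: int      # tokens consumed so far this month


def budget_level(budget: RouteBudget) -> str:
    """Return 'ok', 'warn' (>= 75%), 'critical' (>= 90%), or 'exhausted' (>= 100%)."""
    if budget.monthly_tokens <= 0:
        raise ValueError("monthly_tokens must be positive")
    ratio = budget.used_tokens / budget.monthly_tokens
    if ratio >= 1.0:
        return "exhausted"
    if ratio >= CRITICAL_RATIO:
        return "critical"
    if ratio >= WARN_RATIO:
        return "warn"
    return "ok"
```

A scheduled job could evaluate every route and page someone at `critical`, leaving `exhausted` as the trigger for the written fallback decision.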

Decision matrix: cloud APIs versus Ollama versus hybrid inference routing

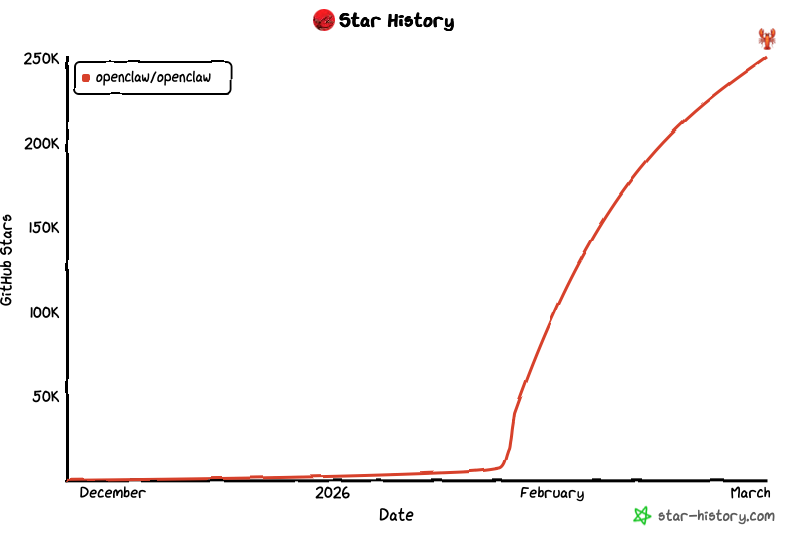

Use this matrix in design reviews and incident retrospectives. Numbers are representative planning anchors for small and medium gateways in 2026; adjust with your vendor SKUs and Apple silicon generation.

| Strategy | Best for | Primary risk | Minimum controls |
|---|---|---|---|
| Cloud APIs only | Teams needing frontier models without local hardware | Quota ceilings, provider incidents, policy changes | Key rotation runbooks, budget alarms, explicit model allow lists |
| Ollama local only | Air-gapped workflows, privacy-sensitive prompts, predictable offline demos | RAM pressure, slower iteration on new weights, disk churn for large pulls | Reserve at least sixteen gigabytes RAM headroom on M-series hosts for seven billion parameter class models at modest context, SSD with hundreds of gigabytes free for multiple tags |
| Hybrid: cloud primary, Ollama fallback | Production gateways that must survive quota spikes | Complexity, dual observability, possible quality mismatch when falling back | Automated health checks, documented downgrade messaging, latency budgets per route |
| Hybrid: Ollama primary, cloud burst | Cost-sensitive teams with occasional premium needs | Accidental cloud spend if burst triggers misfire | Hard caps on burst routes, separate API keys with low limits, monthly reconciliation |

When strategies tie, prefer explicit routing tables and archived health JSON over ad hoc edits during incidents. Hybrid routing pays for itself when failover is rehearsed quarterly and when operators agree which user-facing messages appear when local models answer instead of cloud ones. Align any routing policy with your installation baseline: container users must inject the same environment into both the gateway and any sidecar that spawns Ollama; npm users must verify PATH resolution for ollama binaries under launchd or systemd.
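An explicit routing table can be as simple as a mapping from traffic class to a primary and an optional fallback backend. The sketch below is illustrative only: it does not reflect OpenClaw's actual configuration format, and the traffic class and backend names are hypothetical. It returns a flag when the fallback answers so the agreed downgrade message can be shown.

```python
from dataclasses import dataclass
from typing import Mapping, Optional, Tuple


@dataclass(frozen=True)
class Route:
    primary: str              # backend tried first
    fallback: Optional[str]   # backend used when primary is unhealthy; None means no fallback


# Hypothetical traffic classes and backend names, for illustration only.
ROUTING_TABLE = {
    "interactive-chat": Route(primary="cloud", fallback="ollama"),
    "background-summaries": Route(primary="ollama", fallback=None),
}


def choose_backend(traffic_class: str, healthy: Mapping[str, bool]) -> Tuple[str, bool]:
    """Return (backend, is_fallback); raise if no permitted backend is healthy."""
    route = ROUTING_TABLE[traffic_class]
    if healthy.get(route.primary, False):
        return route.primary, False
    if route.fallback is not None and healthy.get(route.fallback, False):
        return route.fallback, True
    raise RuntimeError(f"no healthy backend for {traffic_class!r}")
```

Keeping the table as data rather than scattered conditionals makes it easy to review in version control and to diff during an incident.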

For remote teams comparing Linux cloud virtual machines with macOS hosts, remember that Ollama on Apple silicon often delivers better performance per watt for the same parameter class, but only when thermal and power conditions stay stable. Laptops that sleep remain a poor primary site for hybrid routing because wake cycles desynchronize health checks.

Prerequisites: Node, Ollama, RAM, disk, and a versioned model inventory

Before you declare hybrid routing production ready, capture prerequisites in a short file checked into infrastructure repositories. Node: record the major version required by your OpenClaw release line, whether you run the CLI globally or inside a project toolchain, and the path printed by running which openclaw, so support can reproduce resolution issues. Pin lockfiles where applicable and avoid mixing multiple Node majors on one gateway user account. Ollama: document the install channel, whether ollama serve runs as a logged-in user agent or a dedicated service, and which environment variables adjust listeners if you cannot use defaults. Pull models deliberately with ollama pull and record exact tags in the inventory, never just latest.
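A versioned inventory becomes more useful when a check rejects moving tags before they reach production. The sketch below assumes a hypothetical inventory layout (a list of records with `provider` and `model` fields); the cloud identifier is a placeholder. It relies on one known Ollama behavior: a model name without a tag resolves to `latest`.

```python
from typing import Dict, List


def unpinned_ollama_tags(inventory: List[Dict[str, str]]) -> List[str]:
    """Return Ollama model strings whose tag is missing or is the moving 'latest' tag."""
    problems = []
    for entry in inventory:
        if entry["provider"] != "ollama":
            continue
        # Look only at the last path segment so a registry host with a port
        # (host:port/name:tag) is not mistaken for a tag.
        last_segment = entry["model"].rsplit("/", 1)[-1]
        _name, separator, tag = last_segment.partition(":")
        if not separator or tag in ("", "latest"):
            problems.append(entry["model"])
    return problems


# Hypothetical inventory for illustration.
INVENTORY = [
    {"provider": "ollama", "model": "llama3.1:8b"},
    {"provider": "ollama", "model": "llama3.1:latest"},
    {"provider": "ollama", "model": "llama3.1"},
    {"provider": "cloud", "model": "example-model-2026-01-01"},
]
```

Running this in CI against the inventory file turns "pin tags in production" from a convention into a gate.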

RAM: plan headroom beyond raw model weights. Conversational agents hold context windows, tool outputs, and transient buffers. A practical starting point for many seven billion parameter setups on Apple silicon is to keep at least eight to twelve gigabytes free for the model footprint plus several gigabytes for concurrent requests and operating system cache, but measure with real prompts because retrieval augmented flows inflate memory. Disk: quantized artifacts still consume tens of gigabytes across multiple tags; reserve at least two hundred gigabytes on fast SSD for experimentation without fighting space pressure during pulls.
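The arithmetic behind the weight footprint is worth writing down, because it shows why quantization decides whether a model fits. This is a rough lower bound only, using decimal gigabytes: parameter count times bits per weight divided by eight. It ignores context cache and runtime buffers, which is why the `overhead_gb` parameter (a hypothetical placeholder) should come from measurement with real prompts.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params_billion * bits_per_weight / 8


def fits_in_memory(params_billion: float, bits_per_weight: float,
                   free_gb: float, overhead_gb: float) -> bool:
    """True if weights plus a measured overhead allowance fit in free memory."""
    return weight_footprint_gb(params_billion, bits_per_weight) + overhead_gb <= free_gb


# Seven billion parameters at 4 bits per weight: 7 * 4 / 8 = 3.5 GB of weights.
# The same model at 16 bits per weight: 7 * 16 / 8 = 14 GB of weights.
```

With twelve gigabytes free and a four gigabyte allowance for context and concurrency, the 4-bit variant fits while the 16-bit variant does not.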

Pair hardware baselines with network expectations. Hybrid routing amplifies sensitivity to DNS, TLS interception, and proxy timeouts. Document internal names for Ollama if the gateway connects via loopback versus a Unix socket versus a LAN address. Document outbound firewall rules for cloud APIs, including which egress IPs your vendor expects when using allow lists. If you terminate TLS at a reverse proxy in front of port 18789, write both the public URL and the internal health probe path so orchestrators do not flap.

Finally, rehearse cold start: stop Ollama, restart the gateway, confirm the first request after boot still resolves models and credentials without manual intervention. Cold start gaps are a frequent source of morning outages when nightly maintenance reboots machines. Cross-check these prerequisites against the multiplatform deployment FAQ when your control plane spans macOS and Linux.

Provider switching, routing rules, and configuration pitfalls that break hybrid setups

Routing changes should behave like infrastructure changes with a maintenance window, even when config reload feels instant. First, separate traffic classes: interactive chat, background summarization, tool-heavy chains, and administrative commands may deserve different providers or latency budgets. Second, avoid ambiguous defaults at the root of configuration that silently inherit different models per subsystem. Third, ensure environment variables that carry API keys actually reach the process that performs outbound calls; container users often mount secrets correctly for the gateway but forget sidecars that host local tools.
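One way to catch the third problem is to have each process report which required variables it cannot see, without ever printing their values. The sketch below is an assumption-laden illustration: `CLOUD_API_KEY` is a hypothetical name, while `OLLAMA_HOST` is the variable Ollama itself reads for its address.

```python
import os
from typing import Iterable, List, Mapping, Optional


def missing_env(required: Iterable[str],
                env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return names of required variables that are unset or blank.

    Only names are returned, never values, so the result is safe to log.
    """
    source = os.environ if env is None else env
    return [name for name in required if not source.get(name, "").strip()]


# Hypothetical requirement list for a sidecar that makes outbound calls.
REQUIRED_FOR_SIDECAR = ["CLOUD_API_KEY", "OLLAMA_HOST"]
```

Calling `missing_env(REQUIRED_FOR_SIDECAR)` at startup in both the gateway and each sidecar makes a forgotten secret mount visible before the first request fails.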

Common pitfalls include mixing temperature and max token defaults that one provider rejects quietly, leaving stale base URLs after a vendor region migration, and pointing Ollama at a remote host without tuning keepalive for long tool calls. Another pitfall is double compression or rewriting inside reverse proxies that corrupts streaming responses. Test streaming and non-streaming modes explicitly because middleware bugs often appear in only one of the two paths.

When switching providers under pressure, change one variable at a time: model identifier, API key, or network path. Capture health JSON before and after each step. If you must roll back, restore the previous configuration artifact from version control rather than hand editing from memory. Tie routing documentation to the same discipline described in gateway operations so channel bridges and inference backends stay aligned during incidents.
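Comparing the before and after snapshots is easier when nested JSON is flattened to dotted paths first. The sketch below makes no assumption about the health JSON schema; the field names in the usage example are hypothetical.

```python
from typing import Any, Dict, Tuple

MISSING = "<missing>"  # marker for a path present in only one snapshot


def flatten(obj: Any, prefix: str = "") -> Dict[str, Any]:
    """Flatten nested dicts and lists into {'a.b[0].c': value} form."""
    flat: Dict[str, Any] = {}
    if isinstance(obj, dict):
        for key, value in obj.items():
            flat.update(flatten(value, f"{prefix}.{key}" if prefix else str(key)))
    elif isinstance(obj, list):
        for index, value in enumerate(obj):
            flat.update(flatten(value, f"{prefix}[{index}]"))
    else:
        flat[prefix] = obj
    return flat


def diff_snapshots(before: Any, after: Any) -> Dict[str, Tuple[Any, Any]]:
    """Return {path: (old, new)} for every path whose value changed."""
    old, new = flatten(before), flatten(after)
    return {
        path: (old.get(path, MISSING), new.get(path, MISSING))
        for path in sorted(old.keys() | new.keys())
        if old.get(path, MISSING) != new.get(path, MISSING)
    }
```

For example, `diff_snapshots({"providers": [{"model": "m-1"}]}, {"providers": [{"model": "m-2"}]})` reports a single change at `providers[0].model`, which is what a one-variable-at-a-time change should look like. Load the archived files with `json.load` before passing them in.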

Example diagnostic sequence for hybrid routing health

openclaw status

curl -sS -m 5 http://127.0.0.1:18789/health || echo "gateway health probe failed"

openclaw doctor

openclaw health --json > /tmp/openclaw-health-hybrid-$(date +%Y%m%d%H%M).json

ollama serve

ollama pull llama3.1:8b

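Run ollama serve only on the host that owns local inference, and adapt model tags to your approved list. Because ollama serve runs in the foreground until stopped, start it in a separate terminal or as a service before running ollama pull. Keep the logical order: prove process ownership, prove HTTP health, validate static configuration, archive structured health, then manage local model lifecycle.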
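The first four steps of that order can be wrapped so the ladder stops at the first failing rung. This is a sketch under two assumptions that you should verify: that each command signals failure with a non-zero exit status, and that capturing output is acceptable (the archive step here does not write the timestamped file). Note that curl without `-f` exits zero even on an HTTP error status. The commands are copied from the sequence above; the function names are hypothetical.

```python
import subprocess
from typing import Callable, List, Sequence, Tuple

# Rungs in the order given above; the local model lifecycle is left out on purpose.
LADDER: List[Tuple[str, List[str]]] = [
    ("process ownership", ["openclaw", "status"]),
    ("HTTP health", ["curl", "-sS", "-m", "5", "http://127.0.0.1:18789/health"]),
    ("static configuration", ["openclaw", "doctor"]),
    ("structured health", ["openclaw", "health", "--json"]),
]


def run_command(argv: Sequence[str]) -> int:
    """Run one command and return its exit status (127 if it cannot run or times out)."""
    try:
        return subprocess.run(list(argv), capture_output=True, timeout=30).returncode
    except (OSError, subprocess.TimeoutExpired):
        return 127


def run_ladder(runner: Callable[[Sequence[str]], int] = run_command) -> List[Tuple[str, bool]]:
    """Run rungs in order; stop after the first failure so later noise is not collected."""
    results: List[Tuple[str, bool]] = []
    for label, argv in LADDER:
        ok = runner(argv) == 0
        results.append((label, ok))
        if not ok:
            break
    return results
```

The injectable `runner` keeps the ordering logic testable without a gateway present.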

Failover discipline: reading doctor versus logs versus provider dashboards during incidents

Failover is not only switching routes; it is proving the switch succeeded before users retry blindly. Start with openclaw status to confirm the gateway process is the one you think owns configuration files and environment. Follow with an HTTP probe on 127.0.0.1:18789 or your proxied equivalent so you distinguish local listener failures from upstream inference failures. Then run openclaw doctor to catch static misconfiguration that appeared after edits. Capture openclaw health --json into a timestamped file so you can diff structured fields across incidents without scrolling screenshots.
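The HTTP probe step is most useful when it separates "nothing is listening" from "the listener answered with an error". The sketch below uses only the Python standard library. The category names are hypothetical, and it makes no assumption about what the health endpoint returns beyond an HTTP status.

```python
import urllib.error
import urllib.request
from typing import Tuple

# Bypass any configured HTTP proxy so a loopback probe really tests the local listener.
_DIRECT = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def probe(url: str, timeout: float = 5.0) -> Tuple[str, str]:
    """Return (category, detail).

    'ok'          : listener answered with a success status
    'http_error'  : listener answered, but with a 4xx or 5xx status
    'unreachable' : connection refused, timed out, or otherwise failed
    """
    try:
        with _DIRECT.open(url, timeout=timeout) as response:
            return "ok", str(response.status)
    except urllib.error.HTTPError as exc:   # must precede URLError, its parent class
        return "http_error", str(exc.code)
    except (urllib.error.URLError, OSError) as exc:
        return "unreachable", str(exc)
```

An `unreachable` result points at the gateway process or proxy, while `http_error` suggests the listener is up and the problem sits further upstream.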

Only after that ladder should you dive into verbose logs. Logs excel at sequencing events and correlating user identifiers with failures, but they are noisy when you lack a baseline. Filter by subsystem: gateway HTTP, provider client, tool execution, and Ollama daemon. When cloud APIs throttle, compare your logs with vendor dashboards and billing views; sometimes the gateway is healthy while the account is simply out of credits. When Ollama fails, check whether the model was evicted due to memory pressure or whether concurrent requests saturated context limits.

Establish a short incident template: time window, route class affected, primary and secondary providers, exact model identifiers, health JSON attachments, and whether failover occurred automatically or manually. This template accelerates postmortems and prevents repeating the same misconfiguration next month. If you operate beside SFTP-based artifact promotion, schedule gateway maintenance windows so file transfers and inference tests do not contend for disk and CPU.

For long-running sessions, watch proxy read timeouts: tool-heavy chains can exceed default sixty-second limits even when models remain healthy. Increase timeouts deliberately and document the new ceiling so security reviews understand the tradeoff. Rehearse quota exhaustion quarterly by simulating a disabled API key in staging and confirming local fallback completes within your latency budget.

FAQ, summary, and when a hosted remote Mac fits hybrid OpenClaw gateways

FAQ highlights: Teams ask whether to pin Ollama tags in production; yes, treat tags like dependency versions. They ask how often to archive health JSON; daily in production pools and after every configuration change in staging. They ask whether hybrid routing complicates compliance; it can, because data may leave the building on cloud routes while staying local on others, so document data classification per traffic class.

- Doctor clean but empty completions: verify model strings, API key scopes, and Ollama residency under load, not only static files.

- Intermittent 429 from cloud: implement exponential backoff where supported, and route discretionary workloads to local models when policy allows.

- Gateway healthy but channels quiet: return to bridge configuration as described in gateway operations articles; inference can work while messaging transport fails.
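For the intermittent 429 case, the delay calculation is the part most often gotten wrong. The sketch below shows exponential backoff with full jitter that defers to a server-supplied retry-after hint when one is present. The function name and default values are hypothetical, and how the hint is parsed from a provider's response is left out.

```python
import random
from typing import Callable, Optional


def backoff_delay(attempt: int,
                  retry_after: Optional[float] = None,
                  base: float = 1.0,
                  cap: float = 60.0,
                  rng: Callable[[], float] = random.random) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    A retry-after hint from the provider takes precedence and is honored in full.
    Otherwise the delay is drawn uniformly from [0, min(cap, base * 2**attempt)].
    """
    if attempt < 0:
        raise ValueError("attempt must be non-negative")
    if retry_after is not None:
        return max(float(retry_after), 0.0)
    ceiling = min(cap, base * (2 ** attempt))
    return rng() * ceiling
```

Jitter spreads retries from concurrent requests so they do not hit the provider in lockstep, and the cap keeps interactive routes from waiting indefinitely before policy sends them to a local model.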

Summary: Reliable hybrid routing combines pinned model inventories, quota awareness, a fixed diagnostic ladder, and rehearsed failover that treats local and cloud backends as peers with different failure modes.

Limitation: Pure cloud routing leaves you exposed to quotas and provider incidents you cannot patch locally. Pure local routing caps quality and freshness when your hardware budget is finite and when you need frontier reasoning for niche tasks.

SFTPMAC angle: A hosted remote Mac offers stable power, continuous reachability, and colocation with SFTP or rsync delivery paths many teams already use for Apple ecosystem artifacts. When your OpenClaw gateway must stay online beside the same audited upload endpoints your CI pipeline trusts, moving off a sleeping laptop reduces desynchronized health checks and permission drift without sacrificing native toolchains. Standardize on infrastructure built for twenty-four seven operation when hybrid inference is part of production, not a weekend experiment.

Should hybrid routing be enabled by default for new gateways?

Enable it only after you document fallback models, user-visible downgrade behavior, and monitoring; otherwise complexity exceeds benefit.

How much log retention is enough?

Keep at least fourteen days on hot storage for incident correlation; longer when compliance requires reconstruction of routing decisions.

Does Linux cloud replace a Mac for Ollama?

Often yes for inference alone; choose Mac when your toolchain, signing, or file workflows assume macOS paths and Apple silicon performance per watt.

Need a stable Mac host for OpenClaw hybrid routing next to managed file delivery? Compare SFTPMAC plans and baseline your gateway there.