When a custom web_search provider is the adult choice

Default bundled search integrations optimize for time-to-hello-world. They rarely encode your data residency story, invoice caps, or the requirement that every agent egress IP is allowlisted by a nervous security team. Moving to a custom provider means you deliberately choose an HTTP contract, attach authentication that your vault owns, and attach observability that finance can read.

Regulated shops often mandate an internal retrieval service that already enforces ACLs on documents. Pointing tools.web.search at that service reuses policy instead of inventing a parallel shadow index. The trade-off is engineering: you must keep schemas stable, publish SLOs, and handle partial outages without silently falling back to the public internet unless policy allows.

Cost-aware teams hit vendor 429s during model retries long before they hit token limits. Custom routing lets you shard queries across keys, apply per-team budgets, or throttle automations that spam search on every tool call. Document those budgets beside CPU and RAM because they behave like hidden compute.
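One way to picture a per-team budget is a token bucket placed in front of the search backend. The sketch below is illustrative only: `TeamBudget`, its fields, and the numbers are hypothetical names chosen for this example, not part of OpenClaw.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TeamBudget:
    """Token bucket: holds up to `capacity` queries, refilled at `refill_per_sec`."""
    capacity: float
    refill_per_sec: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.updated = time.monotonic()

    def try_spend(self, now: Optional[float] = None) -> bool:
        """Return True if one query may proceed, False if it should be throttled."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill for the elapsed time, never beyond the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

A proxy could keep one bucket per team or per API key and answer with its own 429 when `try_spend` returns False, so the throttle fires before the vendor's does.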

Availability planning must include corporate forward proxies and split-horizon DNS. A gateway that resolves vendor.example from a laptop may fail on a server that forces traffic through an inspection proxy with custom roots. Validate from the same POSIX user, same environment files, and same cgroup as production.

Operational symmetry matters: if you already walk the status, gateway, doctor, logs ladder for Telegram flakes, reuse it for search. The symptoms differ but the discipline is the same, and one shared procedure prevents random wiki pages that contradict each other during incidents.

Finally, treat configuration edits like code: pull requests, reviewers who understand SSRF, and automated JSON schema checks in CI. The production SSRF article explains why outbound HTTP deserves the same suspicion as inbound webhooks.
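A CI check does not need a full schema engine to catch the worst mistakes. The sketch below uses only the standard library and assumes the illustrative key names from the configuration skeleton later in this guide. The inline `token` key it rejects is a hypothetical example of a mistake, and real key names depend on your release.

```python
import json
from urllib.parse import urlsplit


def check_search_config(raw: str) -> list:
    """Return a list of human-readable problems; an empty list means the check passed."""
    cfg = json.loads(raw)
    search = cfg.get("tools", {}).get("web", {}).get("search")
    if not isinstance(search, dict):
        return ["tools.web.search is missing"]
    errors = []
    url = urlsplit(str(search.get("baseUrl", "")))
    if url.scheme != "https":
        errors.append("baseUrl must use https")
    if url.hostname not in search.get("allowedHosts", []):
        errors.append("baseUrl host is not in allowedHosts")
    auth = search.get("auth", {})
    if "token" in auth:  # hypothetical inline-secret key
        errors.append("inline token found; use tokenEnv")
    if not auth.get("tokenEnv"):
        errors.append("auth.tokenEnv is missing")
    timeout = search.get("timeoutMs")
    if not isinstance(timeout, int) or timeout <= 0:
        errors.append("timeoutMs must be a positive integer")
    return errors
```

Run it in the pull-request pipeline and fail the build when the returned list is not empty.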

Runbooks should state who approves provider URL changes, which maintenance window allows gateway restarts, and how to verify rollback using the snapshot discipline from MCP plugin upgrade notes. Without owners, search becomes the integration everyone uses and nobody maintains until quotas burn.

Multi-region deployments need explicit answers about whether each gateway cluster talks to the same search endpoint or region-local mirrors. Divergent DNS answers cause flaky comparisons where Tokyo succeeds while Dublin fails despite identical JSON, which wastes hours unless you log effective endpoints per request.
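Logging the effective endpoint can be as simple as resolving the configured host from the gateway itself and recording the addresses next to the region. This is a minimal sketch: the function names and the log format are assumptions, and the `resolver` parameter exists only so the lookup can be swapped out in tests.

```python
import socket
from urllib.parse import urlsplit


def effective_endpoints(base_url, resolver=socket.getaddrinfo):
    """Resolve the search host as this machine sees it and return sorted unique IPs."""
    parts = urlsplit(base_url)
    port = parts.port or (443 if parts.scheme == "https" else 80)
    infos = resolver(parts.hostname, port, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the address.
    return sorted({info[4][0] for info in infos})


def endpoint_log_line(region, base_url, addresses):
    """Format one log line that makes per-region DNS differences visible."""
    return f"region={region} search_url={base_url} resolved={','.join(addresses)}"
```

Comparing these lines between Tokyo and Dublin shows quickly whether identical JSON is reaching different backends.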

When models switch between providers mid-incident, search traffic may spike as tools re-enumerate capabilities. Cap simultaneous tool-discovery calls so a model outage does not become a search outage through accidental amplification.
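A cap on simultaneous discovery calls can be sketched with a bounded semaphore that refuses new work instead of queueing it. `DiscoveryGate` is a hypothetical helper for illustration. Where such a gate would sit (gateway, proxy, or shim) depends on your deployment.

```python
import threading
from contextlib import contextmanager


class DiscoveryGate:
    """Allow at most `max_concurrent` discovery calls; reject the rest immediately."""

    def __init__(self, max_concurrent):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    @contextmanager
    def slot(self):
        # Non-blocking acquire: callers fail fast rather than pile up behind the cap.
        if not self._slots.acquire(blocking=False):
            raise RuntimeError("discovery cap reached; retry later")
        try:
            yield
        finally:
            self._slots.release()
```

Failing fast is a deliberate choice here: a queue would only delay the amplification, while a rejection gives the caller a clear signal to back off.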

Pain decomposition

Pain 1: Secret sprawl. API keys embedded in JSON that also lives in dotfile sync tools leak faster than SSH keys. Prefer environment indirection and short-lived tokens where your identity provider allows.

Pain 2: SSRF through search parameters. If the model can supply arbitrary query strings and your backend naively fetches first hits, you recreated SSRF. Enforce server-side allowlists and strip internal URL patterns.

Pain 3: Doctor false negatives. Doctor validates local consistency, not remote vendor health. Pair it with synthetic canary queries and external blackbox monitors.

Pain 4: Hot reload versus cold restart. As with MCP lifecycle issues, some builds apply JSON partially until the gateway process recycles. When in doubt, restart cleanly and measure child counts.

Pain 5: Confusing search with scraping. Bulk page retrieval belongs behind dedicated fetch tooling with caching and robots awareness, not a quick search hack that amplifies traffic.
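For Pain 2, a server-side check before any follow-up fetch might look like the sketch below. It assumes an exact-hostname allowlist and an https-only policy. Both are illustrative choices, and `is_fetch_allowed` is a hypothetical helper name.

```python
import ipaddress
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"https"}


def is_fetch_allowed(url, allowed_hosts):
    """Permit a fetch only for https URLs whose hostname is explicitly allowlisted."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
    except ValueError:
        return False  # malformed URL
    if parts.scheme not in ALLOWED_SCHEMES or not host:
        return False  # blocks file://, http://, and empty hosts
    try:
        ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal, so fall through to the hostname allowlist.
        return host in allowed_hosts
    # Literal IPs are always rejected; this covers 169.254.169.254 and loopback.
    return False
```

This check alone does not stop DNS rebinding, where an allowlisted name resolves to an internal address, so the resolved address still needs validation at connect time or at the egress firewall.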

Provider decision matrix

| Backend style | Strength | Cost driver | Security note | Best for |
|---|---|---|---|---|
| Vendor JSON API | Fastest compliance story if already approved | Per-query billing plus burst penalties | Rotate keys quarterly; monitor 401 spikes | Teams with existing enterprise search |
| Internal retrieval proxy | ACL-aware snippets | Engineering toil and index freshness | Must block file:// and metadata SSRF | Zero-trust document access |
| Self-hosted SearXNG-style | Cost control and air-gap options | Ops time and hardware | Harden admin interfaces separately | Lab and regulated enclaves |
| Plugin shim process | Custom transforms without forking core | Another binary to patch | Treat like MCP regarding upgrades | Legacy SOAP or mainframe bridges |

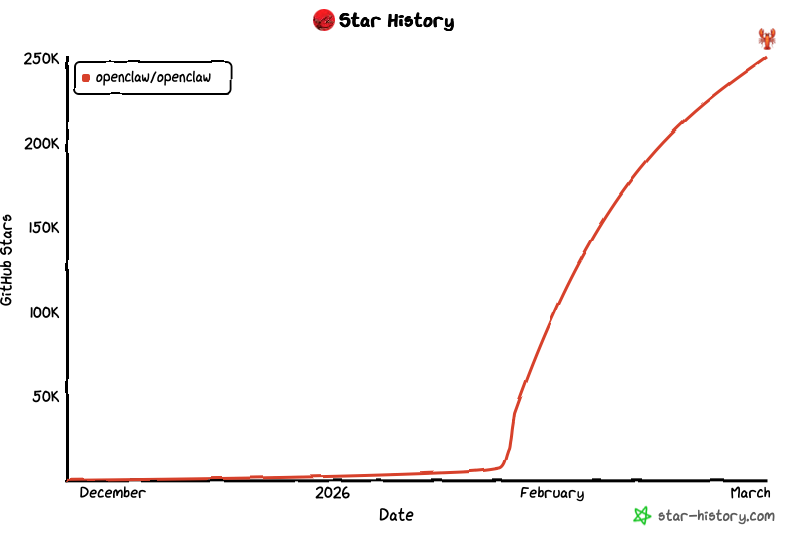

Pick one primary backend per environment, document exceptions, and re-evaluate after major OpenClaw upgrades because schema keys occasionally shift.

Configuration skeleton for tools.web.search

```json
{
  "tools": {
    "web": {
      "search": {
        "provider": "customHttp",
        "baseUrl": "https://search.corp.example/api/v1/query",
        "auth": {
          "type": "bearer",
          "tokenEnv": "CORP_SEARCH_TOKEN"
        },
        "timeoutMs": 12000,
        "maxResults": 8,
        "allowedHosts": ["search.corp.example"]
      }
    }
  }
}
```

Replace field names with those supported by your release; treat this block as structural guidance, not a promise of literal keys. Always load secrets from environment variables referenced indirectly, never inline bearer strings that land in shell history or screenshots.
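The environment indirection can be illustrated from the consuming side, for example in a proxy or shim you write yourself. The sketch assumes the illustrative `auth.tokenEnv` key from the skeleton. The helper names are hypothetical, and this is not a description of how the gateway resolves secrets internally.

```python
import os


def resolve_bearer_token(search_cfg, env=os.environ):
    """Look up the secret named by auth.tokenEnv; never read it from the JSON itself."""
    var = search_cfg.get("auth", {}).get("tokenEnv")
    if not var:
        raise KeyError("auth.tokenEnv is not set")
    token = env.get(var, "")
    if not token:
        # Report the variable name only, never a token value.
        raise RuntimeError(f"environment variable {var} is empty or unset")
    return token


def auth_headers(search_cfg, env=os.environ):
    """Build the Authorization header for an outbound search request."""
    return {"Authorization": f"Bearer {resolve_bearer_token(search_cfg, env)}"}
```

Failing loudly on an empty variable helps distinguish a missing secret from a vendor-side 401.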

Step 1: Snapshot openclaw.json and note the gateway user.

Step 2: Deploy the HTTP service with mTLS or network policies as required.

Step 3: Apply the JSON, restart the gateway, and run openclaw doctor.

Step 4: Send a canary query and capture logs at info level without leaving tokens in log lines.

Step 5: Enable dashboards for latency and HTTP codes before rolling out to all automations.
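Keeping tokens out of captured logs during the canary step can be approximated with a small redaction filter. The pattern below covers only the `Authorization: Bearer` form. It is a sketch, and other secret shapes in your logs would need their own patterns.

```python
import re

# Matches "Authorization: Bearer <token>" case-insensitively and keeps the prefix.
_BEARER = re.compile(r"(?i)(authorization:\s*bearer\s+)\S+")


def redact(line):
    """Replace any bearer token in a log line with a fixed placeholder."""
    return _BEARER.sub(r"\1[REDACTED]", line)
```

Apply the filter before lines leave the host, since redacting only in the central log store is too late.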

Quantitative guardrails that prevent surprise invoices

Instrument queries per hour, per automation, and per API key. Set soft alerts at seventy percent of contractual daily caps and hard stops that disable noncritical agents first. Pair query volume charts with model retry counts because backoff loops multiply HTTP calls silently.
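The soft-alert and hard-stop thresholds reduce to a few comparisons. In the sketch below the seventy percent figure comes from the text, while the state names and the agent map are hypothetical.

```python
def budget_state(used, daily_cap, soft_percent=70):
    """Classify usage against the daily cap using integer arithmetic."""
    if daily_cap <= 0:
        raise ValueError("daily_cap must be positive")
    if used >= daily_cap:
        return "hard_stop"
    if used * 100 >= soft_percent * daily_cap:
        return "soft_alert"
    return "ok"


def agents_to_disable(state, agents):
    """On a hard stop, return the noncritical agents to disable first.

    `agents` maps an agent name to True when the agent is critical.
    """
    if state != "hard_stop":
        return []
    return sorted(name for name, critical in agents.items() if not critical)
```

Integer percentages avoid floating-point surprises exactly at the threshold.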

Track p50 and p95 latency separately; tail latency often reflects DNS or TLS handshake issues invisible in averages. Compare gateway host clocks to avoid skewed log correlation across regions.
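If your metrics stack does not already compute percentiles, the standard library can do it from raw samples. This sketch assumes latencies are collected as a list of millisecond values. `latency_summary` is a hypothetical helper.

```python
import statistics


def latency_summary(samples_ms):
    """Return p50 and p95 from raw latency samples (needs at least two samples)."""
    # n=100 yields 99 cut points; index 49 is the 50th percentile, index 94 the 95th.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94]}
```

Plotting both values side by side makes a slow tail visible even when the median looks healthy.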

Record payload sizes returned to the model. Oversized snippets inflate context costs downstream even when search feels cheap. Truncate aggressively at the proxy if policy allows.
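Truncation at the proxy can be a few lines. The sketch assumes each result is a dict with a `snippet` field, which is a hypothetical response shape, and the limits shown are placeholders rather than recommendations.

```python
def truncate_snippets(results, max_chars=500, max_results=8):
    """Cap the number of results and the length of each snippet before returning them."""
    trimmed = []
    for item in results[:max_results]:
        text = item.get("snippet", "")
        if len(text) > max_chars:
            # Mark the cut so the model can tell the snippet is incomplete.
            text = text[:max_chars].rstrip() + "..."
        trimmed.append({**item, "snippet": text})
    return trimmed
```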

Maintain an incident notebook with exact curl reproducers using redacted headers so on-call does not guess whether the failure is OpenClaw, the proxy, or the vendor.
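A reproducer is easier to keep redacted when it is generated rather than pasted. The sketch below emits a curl command that references the token as a shell variable. The `q` query parameter and the GET method are assumptions about the backend contract, so adjust both to your service.

```python
import shlex


def curl_reproducer(base_url, query, token_env="CORP_SEARCH_TOKEN", timeout_s=12):
    """Build a curl command whose secret is a shell variable, never a literal value."""
    return " ".join([
        "curl", "-sS", "--max-time", str(timeout_s),
        # Double quotes let the shell expand the variable at run time.
        "-H", f'"Authorization: Bearer ${token_env}"',
        "--get", "--data-urlencode", shlex.quote(f"q={query}"),
        shlex.quote(base_url),
    ])
```

On-call exports the variable in their own shell, so the notebook entry stays safe to share.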

Quarterly, reconcile finance invoices with internal metrics; discrepancies often reveal shadow API keys in forgotten staging gateways.

Capture baseline error budgets: acceptable percentage of 5xx per day, maximum consecutive failures before paging, and median recovery time after vendor incidents. Postmortems for search outages should attach gateway logs, proxy logs, and the exact JSON diff that preceded the event.
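Two of those budget numbers can be computed directly from a stream of HTTP status codes. The thresholds and function names below are placeholders for illustration.

```python
def error_ratio(status_codes):
    """Fraction of responses that were 5xx; 0.0 for an empty window."""
    if not status_codes:
        return 0.0
    return sum(1 for code in status_codes if code >= 500) / len(status_codes)


def should_page(status_codes, max_consecutive=3):
    """Page once `max_consecutive` 5xx responses occur in a row."""
    streak = 0
    for code in status_codes:
        streak = streak + 1 if code >= 500 else 0
        if streak >= max_consecutive:
            return True
    return False
```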

When automations run overnight, schedule heavier indexing or batch jobs so they do not contend with interactive user queries on the same API key. Separate keys for automation versus humans simplifies attribution and prevents noisy retries from blocking critical workflows.

Document maximum snippet length and forbidden MIME types at the proxy so operators can reject surprising responses before they reach model context. That guardrail costs little CPU compared to downstream token spend.
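The rejection rule can be sketched as a gate on the response headers. This example swaps the forbidden-type list for a stricter allowlist of media types. The allowed set and the size limit are assumptions to adapt to your policy.

```python
ALLOWED_TYPES = {"application/json"}


def accept_response(content_type, body_len, max_bytes=65536):
    """Accept only allowlisted media types within the size limit."""
    # Drop parameters such as "; charset=utf-8" before comparing.
    media_type = content_type.split(";", 1)[0].strip().lower()
    return media_type in ALLOWED_TYPES and body_len <= max_bytes
```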

Outbound security and SSRF boundaries

Search is outbound HTTP, yet it participates in the same trust story as inbound webhooks. If your backend dereferences URLs found in snippets, you must validate schemes and hosts before any follow-up fetch. Align thinking with SSRF hardening guidance even though traffic direction differs.

Host allowlists at the OpenClaw configuration layer are necessary but not sufficient; enforce again at the corporate egress firewall so a bad config cannot wander. Log blocked attempts with automation identifiers to trace which prompt pattern triggered them.

Separate production and staging tokens physically; shared keys cause staging experiments to exhaust production quotas. Use distinct baseUrl values per environment to reduce human error.

When TLS inspection proxies re-sign certificates, import their roots into the trust store of the service user, not only admin accounts. Doctor may still pass while queries fail with opaque TLS errors.

Document which automations may call search unsupervised versus those that require human approval channels described in HITL articles. The boundary is policy, not technology alone.

How MCP, web_fetch, and web_search differ in practice

MCP servers extend tools through subprocess or HTTP transports with independent versioning; see stdio leak guidance for lifecycle traps. They excel when tools need local filesystem access or bespoke binaries.

web_search should stay a thin HTTP integration with predictable quotas. Overloading it to fetch arbitrary URLs turns it into an unmonitored crawler.

web_fetch style capabilities, where present, should carry caching, robots.txt respect, and explicit size limits. If your stack collapses fetch and search, document the combined threat model.

After any change, cold restart, then run doctor and a channel probe as described in gateway operations. TLS edge issues belong in reverse proxy notes.

Glossary for search operations

tools.web.search names the JSON subtree that declares HTTP search integration for many OpenClaw distributions.

Custom provider means any backend you operate or contract beyond the default demo integration.

Bearer token is an Authorization header secret that must never appear in argv listings or public logs.

Canary query is a fixed test phrase used post-deploy to validate latency and auth without touching user data.

Cold restart fully stops the gateway process before starting again to flush cached integration state.

Doctor scans configuration and environment for known footguns; it is not a live vendor monitor.

SSRF (server-side request forgery) is the risk that arises when user-controlled strings become URLs the server fetches.

Allowlist enumerates permitted hostnames or CIDRs for egress.

429 signals rate limiting; handle with backoff and alerting.

Split horizon DNS resolves the same name differently inside corporate networks versus the public internet.

Forward proxy mediates outbound HTTP with inspection and policy enforcement.

mTLS adds client certificates for mutual authentication between gateway and search API.

Quota caps queries or spend per day; treat it like CPU throttling.

Retry storm happens when models or clients loop on transient errors, multiplying HTTP calls.

Context inflation grows token usage when oversized snippets return to the model.

Staging parity requires identical integration shapes as production, differing only in endpoints and keys.

JSON schema CI lints configuration before merge to catch typos early.

Observability cardinality stays manageable by labeling metrics per environment, not per chat session.

Incident reproducer is a curl command with redacted secrets that proves where failure starts.

Hosted remote Mac is managed Apple hardware suitable for gateways plus SFTP-driven build delivery.

Egress policy is the written rule set describing which hosts and ports automation may call.

Token rotation replaces API secrets on a calendar rhythm without service downtime when dual-key schemes exist.

Schema drift appears when upgrades rename JSON keys; CI detection prevents silent misconfiguration.

Blackbox probe is an external synthetic monitor that queries through the public path, not only localhost.

Automation budget caps concurrent agents that may call search during incidents.

Proxy auth covers upstream username-password or SPNEGO layers that gateways must supply to reach the internet.

Log redaction strips tokens and PII before centralized logging ships to third parties.

Change advisory is a lightweight CAB note for JSON edits that affect egress or quotas.

FAQ and hosted Mac bridge

Should search share keys with LLM providers?

No. Separate keys simplify rotation, billing attribution, and blast-radius isolation.

Do I need IPv6 egress planning?

If your vendor prefers IPv6 or your host enables it by default, verify firewall symmetry or searches will flap mysteriously.

What if our search API is on-prem only?

Use the same mesh or VPN paths you trust for SSH; document MTU and DNS dependencies beside the OpenClaw notes in install guides.

Summary: Custom web_search is configuration plus outbound security plus observability. Treat it like any production integration, not a hidden browser tab.

Limits: Self-managed gateways stack proxies, tokens, disks, and Apple-friendly build hosts. SFTPMAC hosted remote Mac packages uptime and ingress patterns teams already use for artifacts, which pairs cleanly with long-running OpenClaw gateways that must stay online while search policies evolve.

Keep reading order consistent: gateway ladder, then MCP lifecycle, then TLS edge, then this search guide, then SSRF hardening. Consistency beats novelty during outages.

Review SFTPMAC plans when you need stable macOS gateways alongside compliant file delivery.